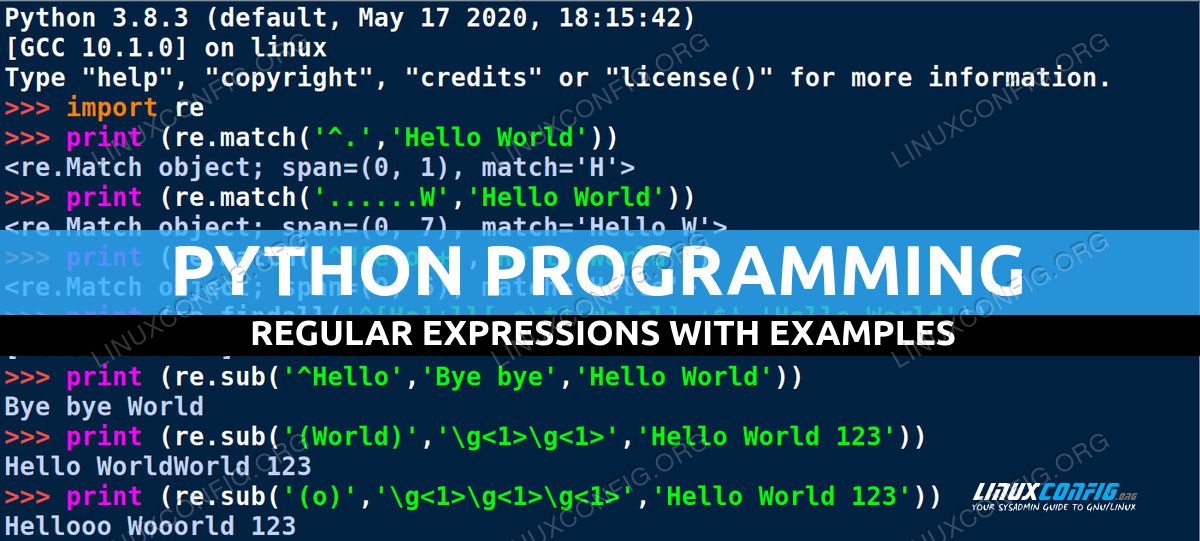

cleantext is a Python package for cleaning raw text. Its cleaning operations include:

- Convert the entire text into a uniform lowercase.
- Remove or replace part of the text with a custom regex.
- Remove stop words, and choose a language for stop words. (Stop words are generally the most common words in a language with no significant meaning, such as is, am, the, this, are.)
- Stem the words. (Stemming reduces inflected forms of a word to a common stem. For example, stemming the words run, runs, running results in run, run, run.)

cleantext requires Python 3 and NLTK to execute.

To return the text in a string format, use `cleantext.clean`. To return a list of words from the text, use `cleantext.clean_words`. To choose a specific set of cleaning operations, pass the corresponding flags:

```python
cleantext.clean_words(
    "your_raw_text_here",
    clean_all=False,     # Execute all cleaning operations
    extra_spaces=True,   # Remove extra white spaces
    stemming=True,       # Stem the words
    stopwords=True,      # Remove stop words
    lowercase=True,      # Convert to lowercase
    numbers=True,        # Remove all digits
    punct=True,          # Remove all punctuations
    reg='',              # Remove parts of text based on regex
    reg_replace='',      # String to replace the regex used in reg
    stp_lang='english',  # Language for stop words
)
```

Examples

```python
import cleantext

cleantext.clean(
    'This is A s$ample !!!! tExt3% to cleaN566556+2+59*/133',
    extra_spaces=True, lowercase=True, numbers=True, punct=True,
)
```

This returns `'this is a sample text to clean'`.

Data Cleaning Techniques For NLP related Problems

Data preprocessing is an important concept in any machine learning problem, especially when dealing with text-based statements in Natural Language Processing (NLP). In this tutorial, you will learn how to clean text data using Python to make some meaning out of it. Text can contain punctuation, stop words, special characters, or symbols, all of which make the data harder to work with.

In the example below you will learn about sentiment analysis using Python. The ideal way to start with any machine learning problem is first to understand the data, then clean the data, and only then apply algorithms to achieve better accuracy.

Import Python libraries such as pandas to store the data in a dataframe. We can use matplotlib and seaborn for better data analysis through visualization. The dataframe has 3 columns: id, label, and tweet. In this text analysis, preprocessing is applied to the ‘tweet’ column because we are concerned only with the tweets. The dependent variable is the ‘label’ column, which gives the tweet sentiment as 0 (Positive) and 1 (Negative).

As you may have noticed in the output above, special characters like ^^, #, and :) are not useful for predicting the sentiment of the reviews.

- Train_df.shape: Gives the shape of the entire dataframe (7920 rows and 3 columns). Note that shape is an attribute, so it is written without parentheses.
- Train_df.info(): Prints information about the dataframe, including column data types, non-null counts, and memory usage.
- Train_df.describe(): Returns summary statistics such as the mean, standard deviation, and distribution of the data, excluding NaN values.

Data Preprocessing/Data Cleaning using Python:

A fast and convenient way to clean text in Python is with regular expressions. Python ships with a built-in regex module, `re`, in every version, so you don’t have to install any additional libraries.

- Line 4: Remove HTML-related content such as website links (http, https, www).
- Line 5: Remove punctuation and special characters such as #, $, %.
- Line 6: Remove any numerical values present in the dataset.

Words like a, an, but, we, I, do, etc. are known as stop words.
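The regex cleaning steps from the tutorial (removing links, punctuation and special characters, numbers, and stop words) can be sketched with only the standard-library `re` module. This is a minimal illustration, not the tutorial's own code: the function name `clean_tweet`, the tiny `STOP_WORDS` set, and the sample tweet are all assumptions made for the example.

```python
import re

# Small illustrative stop-word list (an assumption for this sketch);
# real projects usually take a fuller list from a library such as NLTK.
STOP_WORDS = {"a", "an", "but", "we", "i", "do", "is", "am", "the", "this", "are"}


def clean_tweet(text: str) -> str:
    """Return a lowercased tweet without links, punctuation, digits, or stop words."""
    text = text.lower()
    # Remove website links starting with http://, https://, or www.
    text = re.sub(r"(?:https?://|www\.)\S+", " ", text)
    # Remove punctuation and special characters such as #, $, %.
    # Note: this also strips non-ASCII letters, which may not suit every language.
    text = re.sub(r"[^a-z0-9\s]", " ", text)
    # Remove numerical values.
    text = re.sub(r"\d+", " ", text)
    # Drop stop words; split/join also collapses the leftover whitespace.
    words = [w for w in text.split() if w not in STOP_WORDS]
    return " ".join(words)


# Hypothetical sample tweet, not taken from the tutorial's dataset.
sample = "We love this phone!!! #awesome :) 100% http://example.com/x"
print(clean_tweet(sample))  # love phone awesome
```

Links are removed before punctuation on purpose: stripping `:` and `/` first would break the URL into plain words that the link pattern could no longer match.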

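The dataframe inspection calls described in the tutorial (`shape`, `info()`, `describe()`) can be tried on a small stand-in dataframe. This is a sketch assuming pandas is installed; the three rows below are invented placeholders with the same column names (id, label, tweet), not rows from the tutorial's 7920-row dataset, and the variable name `train_df` is chosen for the example.

```python
import pandas as pd

# Invented placeholder rows that mimic the id / label / tweet layout.
train_df = pd.DataFrame(
    {
        "id": [1, 2, 3],
        "label": [0, 1, 0],
        "tweet": [
            "Love my new phone #happy",
            "Battery died again :( http://example.com",
            "Great camera ^^ 10/10",
        ],
    }
)

# shape is an attribute (no parentheses): (rows, columns).
print(train_df.shape)

# info() prints column dtypes, non-null counts, and memory usage.
train_df.info()

# describe() summarizes the numeric columns (count, mean, std, quartiles),
# skipping NaN values; the text column 'tweet' is left out by default.
summary = train_df.describe()
print(summary)
```

Calling `train_df.shape()` with parentheses raises a `TypeError`, because `shape` is a plain tuple rather than a method.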